Vocal Collision - An Interactive Acapella Performance (Draft)

Abstract, Intro, Related Works, and part of Methodology

Andy Cabindol

March 4th, 2026

Abstract

Vocal Collision is an interactive audio-visual performance experience that blurs the barrier between performers and audience members through the medium of acapella. By using live vocals as an input for projection animations, audience members are invited to actively participate in the concert through arm movements and hand gestures, challenging the idea of passive consumption. This project seeks to answer how audience members might interact with live singing performances, and how to visualize vocal harmony in real time for folks who aren't familiar with music theory.

To achieve this, traditional live acapella performances and light art installations were studied. However, instead of complicated stage lighting with timed effects, four projectors oriented to cover all four walls of the room provide visual feedback when audience members lift a hand over their heads. When someone raises a hand, a glowing light is projected and a harmonized chord is played, following the chord progression of the live acapella performance.

The success of the experience was evaluated through a combination of observation of audience engagement and reactions, as well as a survey at the end of the experience. Audience members found the interaction between acapella performance and their participation with light projections mesmerizing, encouraging them to experiment with pitch and volume. While ambient noise occasionally triggered unintentional harmonies, the connection between sound and light was extremely effective.

Despite these minor sensitivity issues, Vocal Collision was deemed successful. If scaled for larger venues, this system could redefine live acapella performances by making the audience a part of the experience, and an instrument in the mix of sounds.

Introduction

Acapella as a growing medium and my attachment towards it

Acapella, derived from the Italian a cappella ("in the style of the chapel"), originated in religious song in European churches during the 15th and 16th centuries, where human voices were used as the main instruments. The genre has since evolved to include modern pop arrangements. I joined an acapella group called NYU Vocollision as the beatboxer in the fall of 2022. Since then, my creative projects have always involved sound and music. This project selects the medium of acapella specifically for its human intimacy during performance, and for the love that I have for NYU Vocollision, of which I am now president.

The shift toward interaction

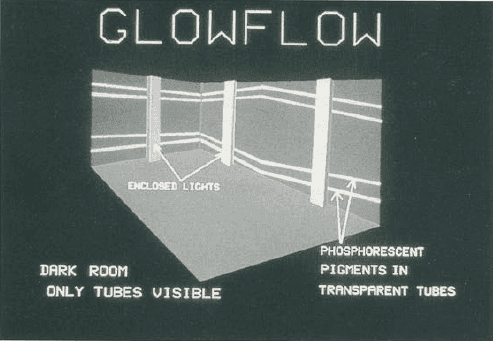

The term "breaking the fourth wall" has been used to describe a relationship in performance where the artist addresses the audience directly. However, the rise of interactive art in the 20th and 21st centuries, notably spearheaded by figures like Myron Krueger and studios like teamLab, has opened a whole new way of consuming art: active participation. Take Glowflow by Myron Krueger, one of the earliest interactive art installations, where light beams would illuminate based on where audience members stepped.

Modern audiences are no longer restricted to just watching a performance; they can interact with the work. A more recent example of this concept is Jacob Collier's Audience Choir performances, where he conducts sections of the crowd to sing certain notes, rhythms, and timbres to perform an arrangement live. These interactive arrangements are highly engaging and gain traction easily on social media platforms such as Instagram Reels and TikTok.

Why combine acapella and interaction?

With Collier's audience choir interaction as a north star, I hope to recreate this same feeling of contribution, community, and joy with Vocal Collision. By mapping hand movements to harmonic layers, we can effectively skip the need for music theory knowledge. This interaction turns the audience into a collective harmonizer, shifting the focus from individual consumption to communal creation.

Ultimately, Vocal Collision seeks to answer a fundamental question: can we transform live acapella performance from a passive observation into an interactive, multi-sensory experience?

Related Work

As previously mentioned, when it comes to getting a whole crowd to participate in a performance, Jacob Collier is my main reference point. He can turn thousands of random people into a massive, pro-sounding instrument just by waving his arms. He uses his body to tell the crowd when to go higher or lower, basically "playing" the theater like a human keyboard.

Vocal Collision tries to capture that same moment, but instead of a conductor on stage, I’m using light visuals. I am taking inspiration from Collier's comprehensive onboarding and the emotional environment he's able to create.

Another major influence for this project is the immersive play Playhouse Kate directed and written by Fiona Magee (NYU '26). Instead of sitting in a dark theater and waiting for the lights to go down to indicate the start of the play, the audience enters through a "club" where a fake bouncer checks audience members' IDs, a real DJ spins music, and a faux bar serves non-alcoholic drinks. Audience members can dance and meet other people before the actors start their dialogue.

The level of immersion and participation is one that I plan to attempt to replicate with Vocal Collision. Participation needs to be seamless enough that onboarding is smooth and the interaction is easy and addicting.

Gateway is a light installation that projects parallel planes of lasers. By using mist to reveal "sheets" of light, Lachlan Turczan creates a tangible medium that participants can wave their hands through. This is the primary interaction for Vocal Collision. However, since mist and fog are highly restricted, I plan to simply use projections on walls rather than a misty layer to wave a hand through.

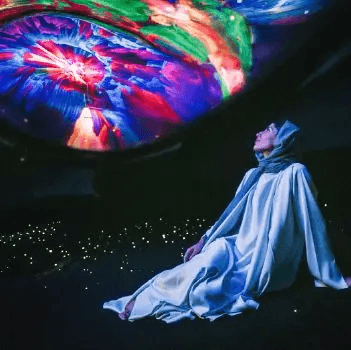

The next three references are from AYA Universe, an immersive park where every room reacts to you in a way that feels organic, almost like you’re interacting with nature rather than code. I had the pleasure of experiencing AYA Universe when visiting Dubai in 2024.

Flora is a light and sound garden featuring bunches of translucent grass-like wire showing different sets of colors. While mainly a visual experience, participants can run their hands through the textured bunches of wire to interact with the light.

Outland is an interactive blob visual that invites participants to control colors, shapes, and sounds with their hand movements.

Drift, the final experience of AYA Universe, is a comforting sound bath with soft cushions and colorful visuals. A chord-like hum plays throughout the room to create a soothing energy. I plan to take inspiration from its visuals and audio experience - Vocal Collision is meant to feel satisfying.

Methodology

In order for Vocal Collision to be a successful concert experience, several processes will run in parallel:

1. Music Arranging & Rehearsal

The foundation of the project is the acapella group. The arrangement I have chosen is "Moon River", composed by Henry Mancini with lyrics by Johnny Mercer and arranged by Trem Ampeloquio. I chose it because a bass voice is present throughout the entire arrangement, which makes it easier to identify the chord. This will be used later on for real-time audio harmonization.

Instead of practicing with a click track, the group of 10 vocal performers across different voice parts (Bass, Tenor, Alto, Mezzo, Soprano) will meet three times a week for rehearsal to lock in intonation and musical expression.

2. Audience Hand Tracking (The Kinect)

I’m mounting a Kinect high above the crowd to get a top-down view of the room. Instead of tracking individual hands, I’m using depth-based blob tracking: the system looks for peaks in the depth map (a hand being raised) and sends their X and Y coordinates to the software.
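As a rough illustration of the peak-finding idea described above, here is a minimal sketch in plain Python. It is not the TouchDesigner setup itself. The function name `find_raised_hands`, the 1200 mm threshold, and the minimum separation are all hypothetical values chosen for the example, and it assumes the depth map arrives as a grid of distances in millimetres from a ceiling-mounted sensor, with 0 meaning "no reading". Because the camera looks down, a raised hand shows up as a spot that is closer to the sensor than everything around it.

```python
def find_raised_hands(depth_mm, hand_threshold_mm=1200, min_separation_px=3):
    """Return (x, y) pixel coordinates of depth-map peaks nearer than the threshold.

    depth_mm is a list of rows; each value is the distance from the overhead
    sensor in millimetres (assumption: 0 marks an invalid pixel).
    """
    rows, cols = len(depth_mm), len(depth_mm[0])
    candidates = []
    for y in range(rows):
        for x in range(cols):
            d = depth_mm[y][x]
            # Skip invalid pixels and anything lower than raised-hand height.
            if d == 0 or d > hand_threshold_mm:
                continue
            neighbours = [
                depth_mm[ny][nx]
                for ny in range(max(0, y - 1), min(rows, y + 2))
                for nx in range(max(0, x - 1), min(cols, x + 2))
                if (ny, nx) != (y, x) and depth_mm[ny][nx] != 0
            ]
            # A peak is a pixel at least as close to the sensor as all neighbours.
            if all(d <= n for n in neighbours):
                candidates.append((d, x, y))

    # Closest peaks win; nearby candidates are merged into one hand.
    candidates.sort()
    hands = []
    for _, x, y in candidates:
        if all(max(abs(x - hx), abs(y - hy)) >= min_separation_px for hx, hy in hands):
            hands.append((x, y))
    return hands


# Tiny made-up room: floor at 2500 mm, two raised hands.
room = [[2500] * 8 for _ in range(6)]
room[1][2] = 1000
room[4][6] = 1100
print(find_raised_hands(room))
```

The threshold would need to be tuned to the real mounting height and to the height of the audience, since a tall person's head could otherwise register as a hand.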

3. Real-Time Audio Harmonization

Each mic input from the stage is fed into Ableton Live, with the bass mic being the main driver for the harmonization chord. Using TDAbleton, the hand-tracking data from the Kinect acts as a fader. If no hands are up, the harmonies are muted. As hands rise, the system gradually unmutes the harmonizers and shifts their pitch based on the current bass note as well as which zone the participant's hand is in. This creates a real-time, multi-voice choir that follows the audience's lead.

4. Visuals in TouchDesigner

The 4-wall projection acts as the visual interface for the concert. I’m using TouchDesigner to generate waves of light. When the Kinect detects a hand, a pulse of light appears on the wall exactly where that person's hand is. This gives the participant immediate visual confirmation that they are the ones controlling the sound.

The visuals are programmed to react to the frequency and volume of the singers, so the walls look like they are vibrating along with the voices.
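To make "react to the frequency and volume" concrete, here is a minimal sketch of two audio features that could drive the walls. It is only an illustration under stated assumptions: the function names, the `full_scale_rms` value, and the choice of a zero-crossing count as the frequency estimate are all mine. A zero-crossing count is a crude stand-in that works on a clean tone; real voices are rich in overtones, so an FFT or an autocorrelation-based pitch tracker would likely be more reliable.

```python
import math


def rms_level(samples):
    """Root-mean-square level of a block of samples (a simple volume measure)."""
    return math.sqrt(sum(s * s for s in samples) / len(samples))


def zero_crossing_frequency(samples, sample_rate):
    """Rough frequency estimate in Hz: upward zero crossings per second."""
    rising = sum(1 for prev, cur in zip(samples, samples[1:]) if prev < 0 <= cur)
    return rising * sample_rate / len(samples)


def wall_parameters(samples, sample_rate, full_scale_rms=0.5):
    """Map audio features to a brightness in [0, 1] and a ripple rate in Hz."""
    brightness = min(rms_level(samples) / full_scale_rms, 1.0)
    ripple_hz = zero_crossing_frequency(samples, sample_rate)
    return brightness, ripple_hz


# One second of a 220 Hz test tone at half amplitude.
tone = [0.5 * math.sin(2 * math.pi * 220 * n / 44100) for n in range(44100)]
print(wall_parameters(tone, 44100))
```

Louder singing raises the brightness and higher pitches raise the ripple rate, so the walls would pulse faster as the singers move up in register.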

The Performance

Vocal Collision will take place as a live, immersive concert on April 25th at the 370 Jay St. Audio Lab. This venue was specifically chosen for its advanced audio equipment, allowing the four-wall projection and the multi-channel vocal setup to be fully realized.

During the performance, a 10-person acapella group will perform "Moon River" while the audience of approximately 40 participants is encouraged to move freely within the environment. The event is designed to feel less like a formal recital and more like a sound garden where the boundaries between the stage and the floor are blurred.

To measure the success of Vocal Collision, I will be using two main methods to see how well the interaction actually worked:

During the performance, I’ll be observing the audience's engagement levels. I’m looking for behavioral onboarding: how quickly people realize their hands are triggering the light and how long they stay engaged.

Immediately following the experience, participants will be asked to complete a short survey. The goal is to gauge their satisfaction and their ability to express themselves musically. For example, I want to know if they felt like they were truly playing the choir or if the interaction felt random. Key questions will focus on the clarity of the visual feedback and the emotional impact of the interactive performance.

…

Collier, Jacob. "Jacob Collier - The Audience Choir (Live at O2 Academy Brixton, London 2022)." YouTube, uploaded by Jacob Collier, 3 Aug. 2022, www.youtube.com/watch?v=3KsF309XpJo.

Playhouse Kate. Directed by Fiona Magee, 2025. Instagram, @playhousekateplay, www.instagram.com/playhousekateplay/.

Blanchard, L., Holt, C., & Paradiso, J. A. (2025). AI Harmonizer: Expanding Vocal Expression with a Generative Neurosymbolic Music AI System. arXiv:2506.18143

Source: https://arxiv.org/abs/2506.18143

This paper talks about musical augmentation, allowing a single performer to sound like a full ensemble through low-latency voice modeling. For my purposes, this serves as proof that a harmonizer framework can take the live acapella soloist’s current pitch and generate a "wave" of harmonies that are musically aligned with the live performance. The audience's hand position (height or distance) can be mapped to the "density" or "spread" of the four-part harmony described in the paper, allowing the audience to adjust the timbre in real time.

White, Gareth. Audience Participation in Theatre. Springer Nature Switzerland, 2024.

Source: https://doi.org/10.1007/978-3-031-69888-0

This study of audience participation in contemporary performances explores the concept of "participatory agency", which is the moment an audience member moves from an observer to an active contributor. White analyzes how the invitation to participate must be designed so that the audience feels safe yet influential, and how the physical space dictates the level of engagement. This study provides the physical and onboarding requirements for my performance. I will use White’s theories to design the invitation to Acapella Waves, both by giving participants a clear impact through real-time harmony and by creating what White calls a "liminal threshold", a physical border that, once crossed, changes the state of the performance. This book helps me justify the use of the laser as more than a gimmick; it is a boundary that separates the "audience space" from the "creative space," making the act of interacting with the laser a deliberate, performative choice.

Turczan, Lachlan. Gateway. Manar Abu Dhabi (Public Art Abu Dhabi), 2025.

Source: https://paad.ae/manar/artwork-detail/gateway

Gateway is a light installation that projects parallel planes of lasers. By using mist to reveal "sheets" of light, Turczan creates a tangible medium that participants can wave their hands through. This is the primary visual for my work. It demonstrates how to create a "physical" boundary using light and mist. While Gateway focuses on a painterly quality of light in wind, Acapella Waves adapts this "misty laser plane" aesthetic to create an interface.

Marshmallow Laser Feast. Laser Forest

Marshmallow Laser Feast's "Laser Forest" uses vertical laser beams that play satisfying musical notes when moved or tapped by the user. This source serves as the "Proof of Concept" for the interaction. It proves that a laser beam can be mapped to MIDI to play audio in real time. While I most likely won't use MIDI in my project, I will use the lightweight interaction as a basis for gauging how likely the audience is to want to add a specific acapella layer to the live performance (e.g., bass, percussion, or harmonies).

Mori, Daichi (Alvie Starr). Audio Visualization and String Interaction Series.

Sources: alviestarr.com / Patreon: Reference Audio Visualizations

Daichi Mori (Alvie Starr) is a design technologist who focuses on sound and light. The specific project referenced involves how light movement reacts to the physical properties of a vibrating string. Using real-time audio analysis, Mori maps the amplitude and frequency of the guitar to the displacement and oscillation of a laser beam. I would use this project's sense of brightness and movement in Acapella Waves. The organic movement of the projection evokes a feeling of intelligence, one that I hope to recreate in Acapella Waves.